In this paper, we teach an AI the concept of metallic, translucent materials and more. The newly synthesized materials can be visualized in real time via neural rendering, and we also propose an intuitive variant generation technique that lets the user fine-tune these recommended materials. This project took more than 3000 work hours to complete – I hope you’ll enjoy the result!

Note: This does neural rendering too!

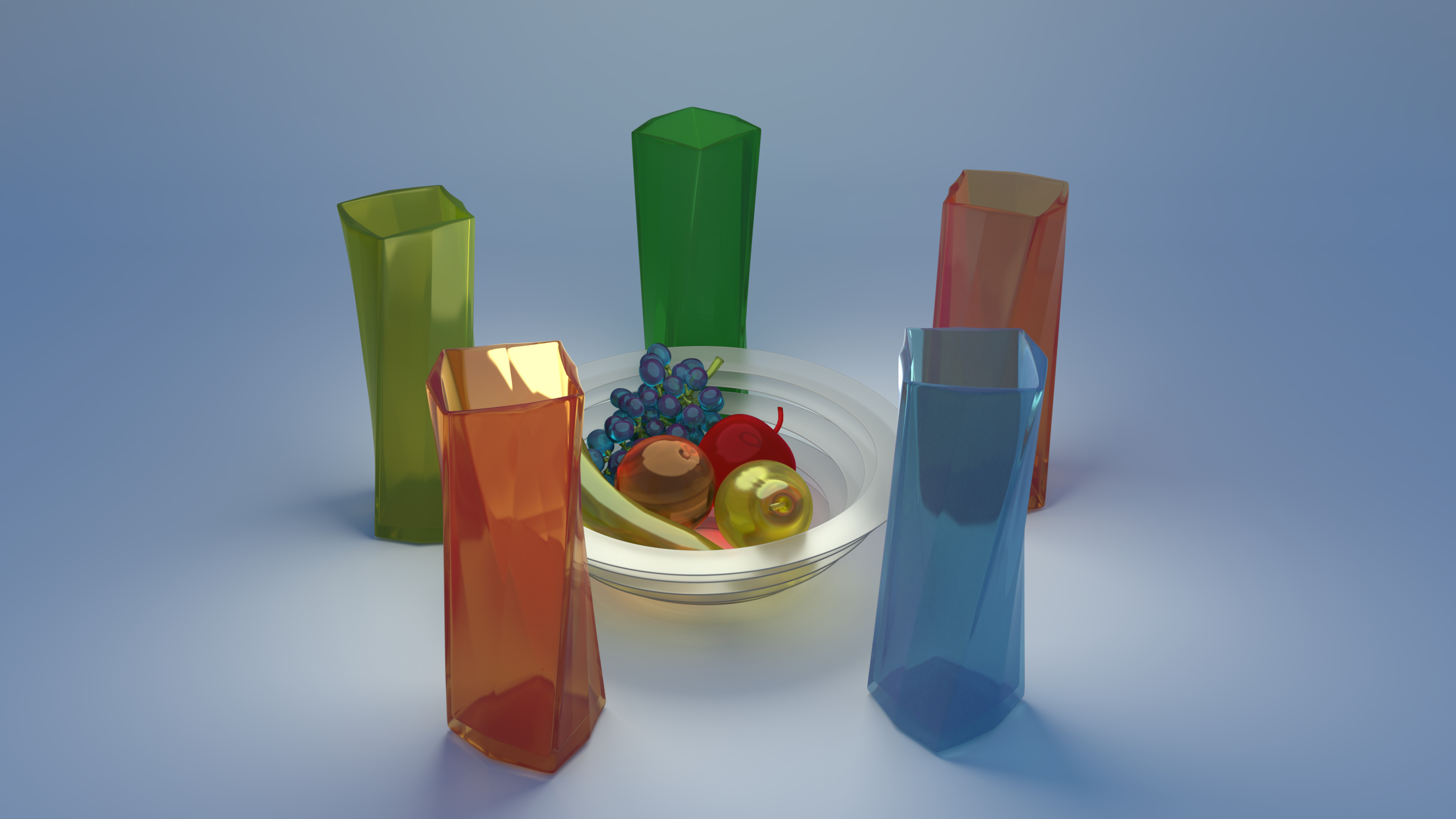

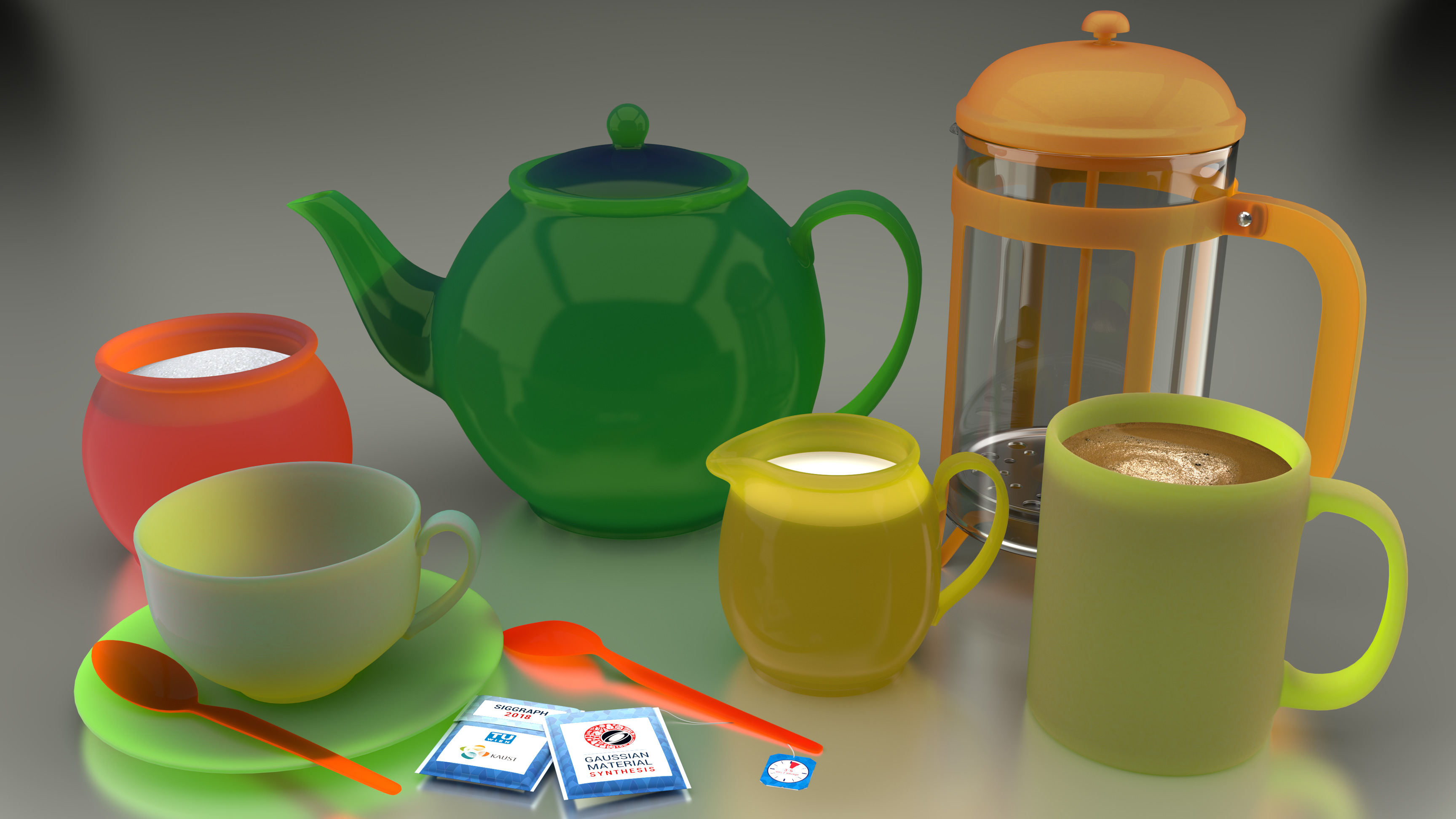

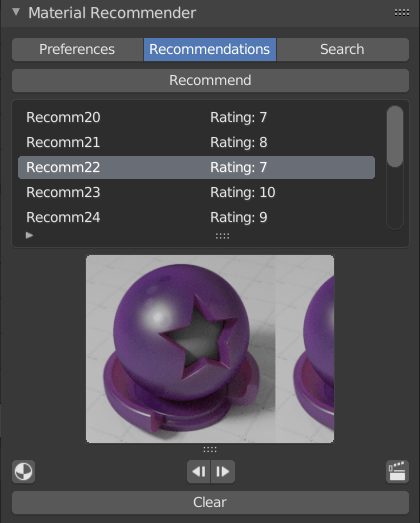

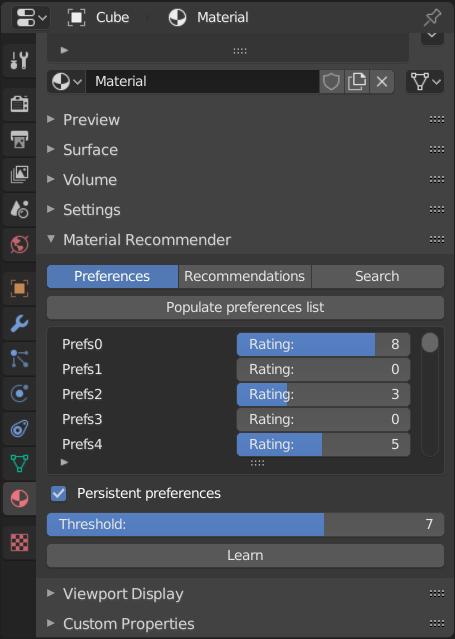

We present a learning-based system for rapid mass-scale material synthesis that is useful for novice and expert users alike. The user preferences are learned via Gaussian Process Regression and can be easily sampled for new recommendations. Typically, each recommendation takes 40-60 seconds to render with global illumination, which makes this process impracticable for real-world workflows. Our neural network eliminates this bottleneck by providing high-quality image predictions in real time, after which it is possible to pick the desired materials from a gallery and assign them to a scene in an intuitive manner. Workflow timings against Disney’s “principled” shader reveal that our system scales well with the number of sought materials, thus empowering even novice users to generate hundreds of high-quality material models without any expertise in material modeling. Similarly, expert users experience a significant decrease in the total modeling time when populating a scene with materials. Furthermore, our proposed solution also offers controllable recommendations and a novel latent space variant generation step to enable the real-time fine-tuning of materials without requiring any domain expertise.
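
To make the preference-learning step more concrete, here is a minimal sketch of Gaussian Process Regression over material parameter vectors, written with plain NumPy. It is an illustration under stated assumptions, not the implementation from the paper or its source code: the squared-exponential kernel, the length scale, the noise level, the unit-cube parameter range, and the function names (`rbf_kernel`, `gpr_fit`, `gpr_predict`, `recommend`) are all hypothetical choices. The idea is that a user scores a handful of materials, the regressor predicts scores for unseen parameter vectors, and the highest-scoring random candidates are returned as recommendations.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=0.5, variance=1.0):
    """Squared-exponential kernel between the rows of a and b (assumed kernel)."""
    sq_dists = (
        np.sum(a**2, axis=1)[:, None]
        + np.sum(b**2, axis=1)[None, :]
        - 2.0 * a @ b.T
    )
    # Clamp tiny negative values caused by floating-point round-off.
    return variance * np.exp(-0.5 * np.maximum(sq_dists, 0.0) / length_scale**2)


def gpr_fit(x_train, y_train, noise=1e-3):
    """Return weights alpha = (K + noise * I)^-1 y via a Cholesky factorization."""
    k = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    chol = np.linalg.cholesky(k)
    return np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))


def gpr_predict(x_train, alpha, x_query):
    """Posterior mean of the (zero-mean) GP at the query points."""
    return rbf_kernel(x_query, x_train) @ alpha


def recommend(x_train, y_train, n_candidates=2000, top_k=5, seed=0):
    """Sample random parameter vectors in [0, 1]^d and keep the best-scoring ones."""
    rng = np.random.default_rng(seed)
    candidates = rng.uniform(0.0, 1.0, size=(n_candidates, x_train.shape[1]))
    # Center the scores so that the GP falls back to the mean score far from data.
    mean_score = y_train.mean()
    alpha = gpr_fit(x_train, y_train - mean_score)
    scores = gpr_predict(x_train, alpha, candidates) + mean_score
    best = np.argsort(scores)[::-1][:top_k]  # indices sorted by descending score
    return candidates[best], scores[best]
```

A call such as `recommend(x_scored, user_scores)` would take an `(n, d)` array of already-scored parameter vectors and an `(n,)` array of scores, and return the `top_k` candidates with their predicted scores. In the workflow described above, each recommended parameter vector would then be passed to the neural renderer for a real-time preview rather than to a global illumination renderer.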

Keywords: neural networks, photorealistic rendering, neural rendering, Gaussian process regression, latent variables, Gaussian material synthesis

This paper is under the permissive CC-BY license. The source code is under the even more permissive MIT license. Feel free to reuse the materials and hack away at the code! If you have built something on top of this, please drop me a message – I’d love to see where others take these ideas and will leave links to the best ones here.

If you experience any issues running it, have a look at these workarounds. If you have played with the code and have any comments that could help others pick this up, please let me know and I’ll be happy to add them here. Thank you! The publisher’s version is available here.

Changelog:

2018/05/07 – Added a material loader scene to the supplementary source code to ease the process of importing the recommended materials into your Blender scenes. Make sure to read the README file!

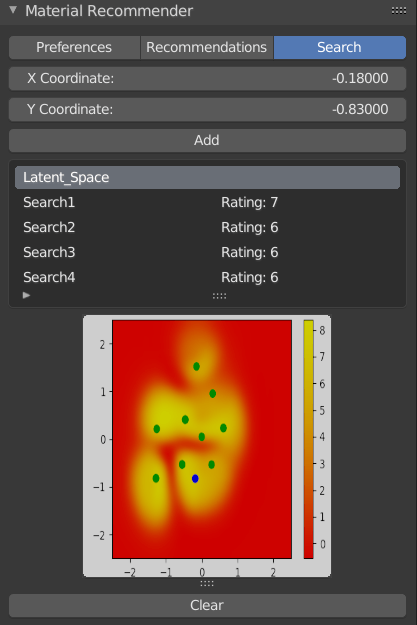

2019/09/24 – This work has been implemented as a Blender add-on! Thank you so much Vlad Vrabie! Give it a try. This is what it looks like:

We would like to thank Robin Marin for the material test scene, Vlad Miller for his help with geometry modeling, Felícia Zsolnai-Fehér for improving the design of many figures, Hiroyuki Sakai, Christian Freude, Johannes Unterguggenberger, Pranav Shyam and Minh Dang for their useful comments, and Silvana Podaras for her help with a previous version of this work. We also thank NVIDIA for providing the GPU used to train our neural networks. This work was partially funded by the Austrian Science Fund (FWF), project number P27974.

Scene and geometry credits: Gold Bars – JohnsonMartin, Christmas Ornaments – oenvoyage, Banana – sgamusse, Bowl – metalix, Grapes – PickleJones, Glass Fruits – BobReed64, Ice cream – b2przemo, Vases – Technausea, Break Time – Jay-Artist, Wrecking Ball – floydkids, Italian Still Life – aXel, Microplanet – marekv, Microplanet vegetation – macio.

@article{zsolnaifeher18gms,
  author    = {K{\'{a}}roly Zsolnai{-}Feh{\'{e}}r and
               Peter Wonka and
               Michael Wimmer},
  title     = {Gaussian material synthesis},
  journal   = {{ACM} Trans. Graph.},
  volume    = {37},
  number    = {4},
  pages     = {76:1--76:14},
  year      = {2018},
  url       = {https://doi.org/10.1145/3197517.3201307},
  doi       = {10.1145/3197517.3201307},
  timestamp = {Wed, 21 Nov 2018 12:44:28 +0100},
  biburl    = {https://dblp.org/rec/bib/journals/tog/Zsolnai-FeherWW18},
  bibsource = {dblp computer science bibliography, https://dblp.org}
}

Or, if you experience any issues with this BibTeX record, look here or here.

- This work has appeared in BlenderNation. Thank you!

- It has been showcased in the SIGGRAPH 2018 Technical Papers Teaser video. Huge honor, thank you so much!

- We are showcased in the TU Wien Press.

- Samuel Hatin wrote a nice summary of this paper. Thank you!

- Out of more than 120 articles, our teaser image was chosen as the cover art for ACM Transactions on Graphics, Volume 37, Issue 4, August 2018. See it here, here in its original form, or below.